Best AI Tools for Sysadmins in 2026: The Admin’s Guide to Automation and Intelligence

If you’re a sysadmin in 2026 and you’re not using AI tools, you’re essentially choosing to do more work while your colleagues automate it away. The landscape has shifted dramatically. AI tools for sysadmins aren’t just shiny extras anymore—they’re becoming essential infrastructure for managing increasingly complex environments at scale.

The challenge isn’t finding AI tools. It’s finding the ones that actually solve real problems without requiring you to become a machine learning engineer. That’s what we’re covering today. We’ll walk through the most practical AI tools that sysadmins are actually using to handle monitoring, incident response, automation, capacity planning, and security—the core tasks that consume your workday.

Why Sysadmins Need AI Tools Now

Let’s be clear about what’s changed. Traditional monitoring tools give you alerts. AI-powered tools give you answers. Instead of getting paged at 2 AM because CPU is at 87%, you get context: “This spike correlates with your scheduled batch job. Everything’s normal.” Instead of manually troubleshooting authentication failures across 50 systems, an AI tool shows you the pattern and the fix.

The commonly cited numbers back this up: teams using AI-assisted monitoring report a 40-50% reduction in mean time to resolution (MTTR) and roughly 30% fewer false positives, though results vary with environment and alert hygiene. Your time is finite, and spending it on repetitive diagnostics is a waste when machines can handle it.

The reality for 2026: if you’re managing more than 200 servers or handling complex multi-cloud infrastructure, you need AI. If you’re managing fewer but working with infrastructure as code and containerization, you definitely need it. Even small teams benefit from AI-powered documentation and automation.

Core AI Tools Every Sysadmin Should Know

1. Intelligent Monitoring and Observability with AI-Powered Platforms

What’s Changed: Traditional monitoring tools like Prometheus or Zabbix require you to manually tune thresholds, create alert rules, and correlate signals. Modern AI observability platforms do the detective work for you.

Datadog remains the market leader for AI-assisted observability. Its machine-learning features (Watchdog and anomaly monitors) establish behavioral baselines automatically. Instead of static thresholds, it learns what’s normal for your systems and alerts you on genuine anomalies.

Here’s what this looks like in practice:

    # Traditional threshold-based alert (still common)
    cpu_usage > 80% for 5 minutes → trigger alert

    # Datadog's anomaly detection approach
    cpu_usage deviates from learned pattern + correlates with other service metrics
      → provides context + suggests root cause

The difference? The first approach might fire 100 alerts, 70 of which don’t matter. The second might give you 5 alerts with actual context.
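To make the contrast concrete, here is a minimal sketch of a static threshold next to a rolling-baseline check. It is not Datadog's algorithm; the window size, z-score limit, and CPU readings are all assumptions chosen for illustration.

```python
from statistics import mean, stdev

def static_alerts(samples, threshold=80.0):
    """Indices where the metric crosses a fixed threshold."""
    return [i for i, value in enumerate(samples) if value > threshold]

def baseline_alerts(samples, window=6, z_limit=3.0):
    """Indices where a sample deviates strongly from its trailing window."""
    alerts = []
    for i in range(window, len(samples)):
        history = samples[i - window:i]
        mu = mean(history)
        # Floor sigma at 1.0 so a nearly flat history does not flag tiny wobbles.
        sigma = max(stdev(history), 1.0)
        if abs(samples[i] - mu) / sigma > z_limit:
            alerts.append(i)
    return alerts

# Hypothetical CPU readings: a host that normally runs hot, then one real jump.
busy_host = [84, 85, 83, 86, 85, 84, 85, 86, 84, 85, 99, 85]

print(static_alerts(busy_host))    # every sample is above 80, so all 12 indices fire
print(baseline_alerts(busy_host))  # [10], only the jump to 99
```

The static rule pages on every sample because this host's normal is above 80%. The baseline check stays quiet until the reading actually departs from recent behavior.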

Datadog specifically handles:

– Anomaly detection across metrics, logs, and traces

– Root cause analysis that correlates incidents across your stack

– Predictive scaling recommendations for autoscaling groups

– Automated remediation suggestions based on your playbooks

For teams without Datadog’s budget, New Relic and Dynatrace offer similar AI capabilities. New Relic’s baseline anomaly detection is solid and often more affordable for small teams.

2. Incident Response and Root Cause Analysis

When incidents hit, time is measured in dollars. AI tools that accelerate diagnosis save real money.

PagerDuty’s Event Intelligence automatically enriches alerts with related signals, correlated metrics, and historical incident data. When you get paged, you don’t just see “Database slow”; you see “Database slow (similar to incident on 2026-01-14 at 03:42 UTC, resolved by scaling read replicas, likely same root cause).”
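The "similar to a past incident" idea can be sketched with plain token overlap. This is an illustration only, not how PagerDuty implements it; the incident records, field names, and the 0.3 similarity cutoff are hypothetical.

```python
def tokens(text):
    """Lowercased word set for a short alert summary."""
    return set(text.lower().split())

def jaccard(a, b):
    """Share of words the two sets have in common."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def most_similar_incident(alert_summary, history, min_score=0.3):
    """Return the best-matching past incident, or None if nothing is close."""
    alert_tokens = tokens(alert_summary)
    best, best_score = None, 0.0
    for incident in history:
        score = jaccard(alert_tokens, tokens(incident["summary"]))
        if score > best_score:
            best, best_score = incident, score
    return best if best_score >= min_score else None

# Hypothetical incident history.
history = [
    {"id": "INC-101", "summary": "database slow read latency high",
     "resolution": "scaled read replicas"},
    {"id": "INC-102", "summary": "disk full on log volume",
     "resolution": "rotated logs"},
]

match = most_similar_incident("database slow high read latency on primary", history)
print(match["id"], "->", match["resolution"])  # INC-101 -> scaled read replicas
```

Real products use far richer signals (topology, timing, metrics), but the shape is the same: score the new alert against history and surface the closest resolved case.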

Splunk’s AI features (notably the Splunk AI Assistant for SPL) let you query your logs in natural language:

    # Instead of writing this SPL query
    # (assumes uri_path and response_time fields are extracted):
    index=main sourcetype=apache
    | stats count as requests, avg(response_time) as avg_response_time by uri_path
    | where avg_response_time > 2000 AND requests > 100

    # You can ask:
    "Show me endpoints with slow response times and high traffic"

    # And the AI constructs the query for you

This is huge for sysadmins who aren’t Splunk experts. You’re effectively getting an experienced analyst’s query-writing ability without the salary.
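Whatever query the assistant produces, it is worth sanity-checking on a small sample. The aggregation involved here (count and average response time per group, then a filter) is easy to reproduce by hand. The sketch below assumes each event is a dict with hypothetical `endpoint` and `response_time` keys.

```python
from collections import defaultdict

def slow_busy_groups(events, key="endpoint", min_avg_ms=2000, min_requests=100):
    """Group events, then keep groups that are both slow and busy."""
    totals = defaultdict(lambda: [0, 0.0])  # group -> [request count, summed ms]
    for event in events:
        bucket = totals[event[key]]
        bucket[0] += 1
        bucket[1] += event["response_time"]
    return {
        name: {"requests": count, "avg_response_time": total / count}
        for name, (count, total) in totals.items()
        if total / count > min_avg_ms and count > min_requests
    }

# Hypothetical sample: one slow busy endpoint, one fast busy one, one slow rare one.
events = (
    [{"endpoint": "/checkout", "response_time": 2500}] * 150
    + [{"endpoint": "/health", "response_time": 20}] * 500
    + [{"endpoint": "/report", "response_time": 9000}] * 3
)

result = slow_busy_groups(events)
print(result)  # {'/checkout': {'requests': 150, 'avg_response_time': 2500.0}}
```

If the generated query and a hand check like this disagree on a known sample, fix the query before you trust it on production data.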

Microsoft Defender for Cloud (if you’re in Azure) includes AI-driven threat intelligence and automated remediation for common misconfigurations. Its foundational posture-management recommendations come at no extra cost, with the deeper workload protections sold as paid plans, yet even the free tier is criminally underutilized.

3. Infrastructure as Code and Configuration Management

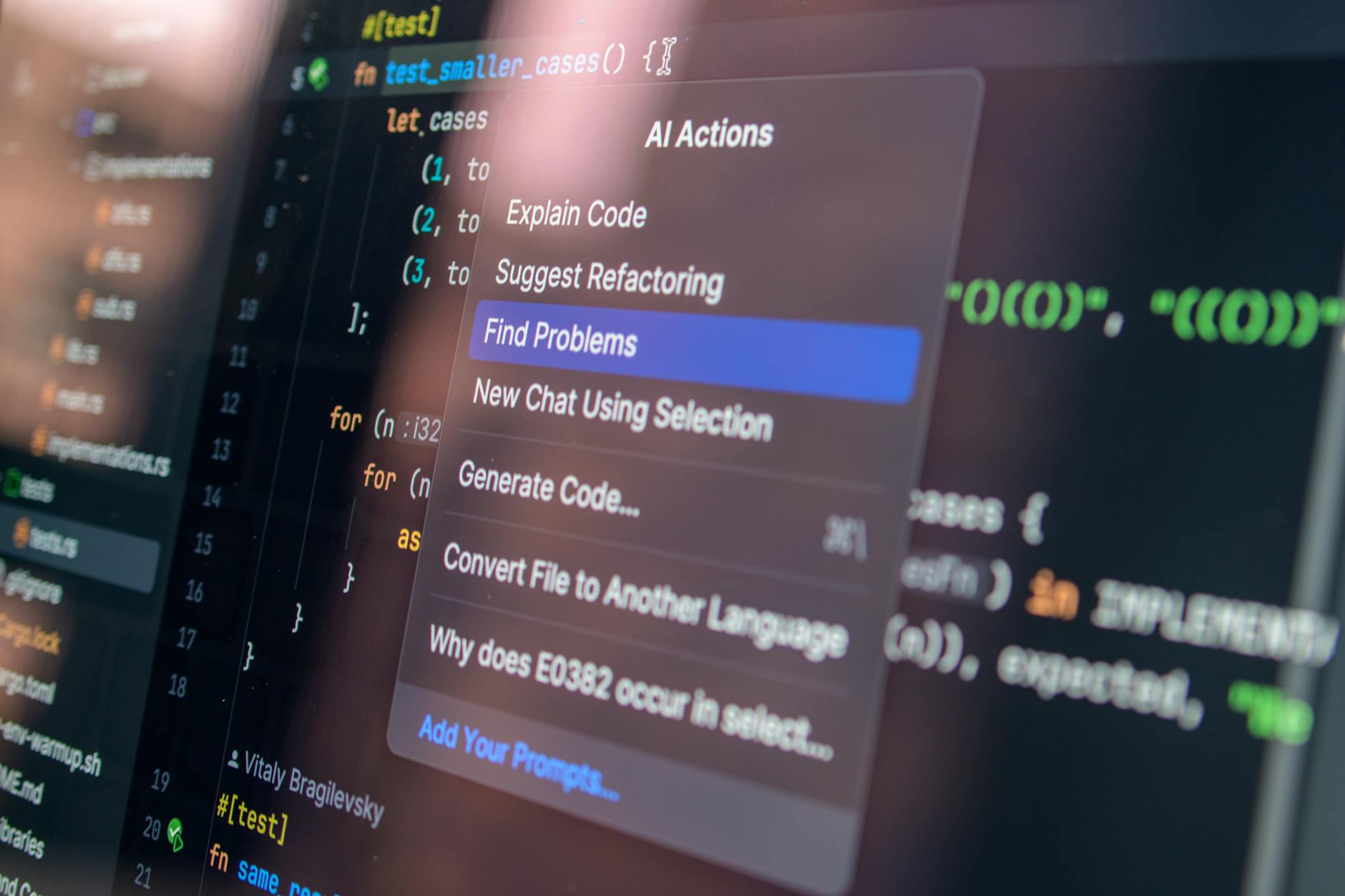

If you’re using Terraform, Ansible, or CloudFormation, AI tools can dramatically speed up your workflow.

GitHub Copilot has become a genuine productivity multiplier for infrastructure code. Give it a comment describing what you need, and it generates working Terraform or Ansible code:

    # You write this comment:
    # Create an RDS PostgreSQL instance with automated backups,
    # in the main VPC, with proper security groups for app tier

    # GitHub Copilot generates something like:
    resource "aws_db_instance" "main" {
      identifier        = "prod-postgres"
      engine            = "postgres"
      engine_version    = "15.3"
      instance_class    = "db.t3.medium"
      allocated_storage = 100
      storage_encrypted = true

      # Master credentials are required for a new instance; the username is a
      # placeholder, and the password is delegated to Secrets Manager.
      username                    = "app_admin"
      manage_master_user_password = true

      db_subnet_group_name   = aws_db_subnet_group.main.name
      vpc_security_group_ids = [aws_security_group.db.id]

      backup_retention_period = 30
      backup_window           = "03:00-04:00"
      multi_az                = true

      # A fixed identifier avoids the perpetual diff that timestamp() causes on every plan.
      skip_final_snapshot       = false
      final_snapshot_identifier = "prod-postgres-final-snapshot"

      tags = local.tags
    }

The code isn’t always perfect (you’ll review it), but it can cut your development time by 40-50% on standard infrastructure components. Copilot helps with documentation too: it’ll draft runbooks based on your existing code.

HashiCorp’s HCP Terraform (formerly Terraform Cloud) includes policy-as-code evaluation through Sentinel or OPA policies. It won’t write your code, but it’ll catch common mistakes automatically before deployment.
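The idea behind policy-as-code is easy to see in miniature. Real policies are written in a policy language and evaluated against the plan; the sketch below uses plain Python over a dict of planned attributes instead, and the four rules are hypothetical examples rather than any vendor's defaults.

```python
def check_db_policy(resource):
    """Return a list of violations for one planned database resource."""
    violations = []
    if not resource.get("storage_encrypted", False):
        violations.append("storage must be encrypted")
    if resource.get("backup_retention_period", 0) < 7:
        violations.append("backup retention must be at least 7 days")
    if resource.get("skip_final_snapshot", False):
        violations.append("final snapshot must not be skipped")
    if resource.get("publicly_accessible", False):
        violations.append("database must not be publicly accessible")
    return violations

# Hypothetical planned resources, e.g. extracted from a plan's JSON output.
planned = {
    "storage_encrypted": True,
    "backup_retention_period": 30,
    "skip_final_snapshot": False,
}
risky = {
    "storage_encrypted": False,
    "backup_retention_period": 1,
    "skip_final_snapshot": True,
    "publicly_accessible": True,
}

print(check_db_policy(planned))  # []
for violation in check_db_policy(risky):
    print("DENY:", violation)
```

Running checks like these in CI gives AI-generated infrastructure code a second reviewer that never gets tired.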

4. Log Analysis and Troubleshooting

Splunk’s Machine Learning Toolkit and Elastic’s ML capabilities can identify patterns in logs that humans would miss. Both tools can:

– Detect anomalous log patterns automatically

– Predict when systems will fail based on log trends

– Suggest relevant log fields for your query automatically
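A rough feel for "anomalous log patterns": collapse the variable parts of each line into a template, then look for templates that almost never occur. This is a deliberately simple sketch, not the method either product uses, and the sample log lines are made up.

```python
import re
from collections import Counter

def template(line):
    """Mask hex ids and numbers so similar lines share one template."""
    line = re.sub(r"\b0x[0-9a-fA-F]+\b", "<HEX>", line)
    line = re.sub(r"\d+", "<NUM>", line)
    return line

def rare_templates(lines, max_count=1):
    """Templates seen at most max_count times in the batch."""
    counts = Counter(template(line) for line in lines)
    return [tpl for tpl, count in counts.items() if count <= max_count]

# Hypothetical log batch: routine requests plus one unusual line.
logs = [
    "GET /api/users/101 200 12ms",
    "GET /api/users/102 200 15ms",
    "GET /api/users/103 200 11ms",
    "worker 7 lost connection to queue after 30 retries",
    "GET /api/users/104 200 14ms",
]

print(rare_templates(logs))
# ['worker <NUM> lost connection to queue after <NUM> retries']
```

On real volumes you would compare template frequencies against a historical baseline rather than a single batch, but the masking step is the part that turns noisy lines into countable patterns.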

But honestly, for most sysadmins, the real magic is in natural language interfaces to logging platforms. Being able to ask “Why are we seeing more 502 errors from the API gateway?” and getting an answer without writing complex queries saves enormous amounts of time.

Loki (if you’re using the Grafana stack) doesn’t have built-in AI, but you can pair it with an LLM to help draft LogQL queries. This is the open-source way to get some of these capabilities without premium licensing.

5. Security and Compliance Automation

CrowdStrike’s Falcon and similar EDR (Endpoint Detection and Response) tools use AI to detect suspicious behavior patterns that signature-based tools miss entirely. This is critical because zero-days won’t match any signature—you need behavioral analysis.

Wiz (cloud security posture management) uses AI to:

– Prioritize vulnerabilities by actual exploitability (not just CVSS score)

– Group vulnerabilities by root cause instead of listing 500 individual findings

– Suggest remediation based on your actual environment and risk tolerance
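The first two points in that list can be sketched in a few lines: score each finding by more than its CVSS number, then roll findings up by root cause. The weighting (2x for internet exposure, 1.5x for a known exploit) and the finding fields are assumptions for illustration, not Wiz's scoring model.

```python
from collections import defaultdict

def risk_score(finding):
    """CVSS adjusted by hypothetical exposure and exploit multipliers."""
    score = finding["cvss"]
    if finding["internet_exposed"]:
        score *= 2.0
    if finding["known_exploit"]:
        score *= 1.5
    return score

def prioritize(findings):
    """Group findings by root cause and rank groups by their worst score."""
    groups = defaultdict(list)
    for finding in findings:
        groups[finding["root_cause"]].append(finding)
    ranked = [
        {
            "root_cause": cause,
            "count": len(items),
            "top_score": max(risk_score(item) for item in items),
        }
        for cause, items in groups.items()
    ]
    return sorted(ranked, key=lambda group: group["top_score"], reverse=True)

# Hypothetical findings.
findings = [
    {"id": "F1", "cvss": 9.8, "internet_exposed": False, "known_exploit": False,
     "root_cause": "outdated base image"},
    {"id": "F2", "cvss": 7.5, "internet_exposed": True, "known_exploit": True,
     "root_cause": "unpatched nginx"},
    {"id": "F3", "cvss": 6.1, "internet_exposed": False, "known_exploit": False,
     "root_cause": "outdated base image"},
]

ranked = prioritize(findings)
for group in ranked:
    print(group["root_cause"], group["count"], group["top_score"])
# unpatched nginx 1 22.5
# outdated base image 2 9.8
```

Note how the 7.5 finding outranks the 9.8 one once exposure and exploitability are factored in, and how two findings collapse into a single fix.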

For compliance automation, CloudMapper and CloudSploit provide automated security scanning, but the real gap-filler is 1Password Teams, with AI-assisted security audit capabilities. It’s primarily a secrets manager, but it’s evolving to catch credential sprawl and access violations automatically.

Practical Comparison: AI Observability Platforms for Sysadmins

| Tool | Best For | Learning Curve | Approx. Price per Month (100 servers, USD) | Key AI Feature |
|---|---|---|---|---|
| Datadog | Large teams, complex stacks | Moderate | $800-1200 | Anomaly detection + Root cause correlation |
| New Relic | Cost-conscious teams | Low | $300-600 | AI-assisted baseline detection |
| Dynatrace | Application-heavy environments | Moderate | $600-1000 | Automatic service dependency mapping |
| Splunk | Log-heavy workloads | High | $500-1500+ | Natural language query generation |
| Elastic | Open-source preference + scale | High | $400-1000+ | ML job automation + anomaly detection |
| Grafana + Prometheus | Budget-first approach | Moderate | $0 (self-hosted) | No native AI; integrates with external LLMs |

Real-world pick: If you’ve got a $1,000/month budget and manage 100+ servers, go with Datadog or Dynatrace. If you need to justify cost to your org, start with your existing Splunk or Elastic investment and layer AI on top. If you’re managing fewer than 50 servers, New Relic’s AI anomaly detection is plenty and saves money.

AI for Automation and Scripting

The old way: Write scripts in Python or Bash, debug them, maintain them, update them when your infrastructure changes.

The new way: Use AI to generate the script, then refine it.

Key tools:

- GitHub Copilot (as mentioned above) works in most major editors and also offers a command-line mode for shell command generation

- Claude is exceptional for explaining error messages and generating complex scripts. “I’m getting this error in my Terraform apply—explain what’s happening” gets you a detailed explanation plus fix suggestions

- ChatGPT with Code Interpreter (its built-in data analysis feature) handles one-off data processing tasks. Need to parse a 500,000-line log file and extract metrics? It can often do that in about 30 seconds, versus the 30 minutes you’d spend writing a script

The practical approach: Let AI draft roughly 80% of the code and write the remaining 20% yourself, then review and test all of it. Never deploy untested AI-generated infrastructure code.

Capacity Planning and Forecasting

This is where AI genuinely shines. Humans are terrible at predicting future resource consumption. Forecasting models are far more consistent, provided they have enough history to learn from.

Datadog’s Forecasting uses historical metrics to predict:

– When you’ll run out of disk space

– When you should scale your database

– When traffic patterns will require additional load balancers
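The disk-space case is the simplest to reason about. A minimal sketch, assuming one usage sample per day and roughly linear growth, fits a least-squares line and extrapolates to capacity. Production forecasting also handles seasonality and trend changes, which this does not.

```python
def days_until_full(daily_used_gb, capacity_gb):
    """Days from the last sample until a fitted linear trend reaches capacity.

    Returns None when there is too little data or usage is not growing.
    """
    n = len(daily_used_gb)
    if n < 2:
        return None
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(daily_used_gb) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, daily_used_gb))
    variance = sum((x - mean_x) ** 2 for x in xs)
    slope = covariance / variance  # GB of growth per day
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    day_full = (capacity_gb - intercept) / slope
    return max(0.0, day_full - (n - 1))

# Hypothetical volume: 500 GB capacity, growing 10 GB per day.
usage = [400, 410, 420, 430, 440]
print(days_until_full(usage, 500))  # 6.0
```

Feed it a longer history and re-run it daily, and you have a crude early warning that needs no static "disk > 90%" threshold.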

AWS Compute Optimizer (free service) uses ML to recommend right-sizing for EC2 instances based on actual usage patterns. Many teams are over-provisioned by 30-40%, so running this analysis quarterly can save thousands per year.
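For intuition about what a right-sizing recommendation weighs, here is a toy heuristic based only on 95th-percentile CPU. It is not how Compute Optimizer works (that service also considers memory, network, and other signals); the 70% target and the rule that halving vCPUs doubles utilization are simplifying assumptions.

```python
# Each step up this ladder doubles the vCPU count.
SIZES = ["m5.large", "m5.xlarge", "m5.2xlarge", "m5.4xlarge"]

def percentile(values, pct):
    """Nearest-rank style percentile over a list of samples."""
    ordered = sorted(values)
    index = round((pct / 100) * (len(ordered) - 1))
    return ordered[index]

def recommend(instance_type, cpu_samples, target_max=70.0):
    """Step down one size at a time while projected p95 CPU stays under target."""
    idx = SIZES.index(instance_type)
    p95 = percentile(cpu_samples, 95)
    while idx > 0 and p95 * 2 <= target_max:
        idx -= 1
        p95 *= 2  # assumption: half the vCPUs means double the utilization
    return SIZES[idx]

# Hypothetical CPU utilization samples (percent).
idle_samples = [10] * 95 + [15] * 5
busy_samples = [50] * 100

print(recommend("m5.2xlarge", idle_samples))  # m5.large
print(recommend("m5.xlarge", busy_samples))   # m5.xlarge
```

The point is the input, not the arithmetic: recommendations come from observed usage over time, not from the size someone guessed at launch.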

Similar tools worth investigating:

– Virtana (formerly Virtual Instruments) for comprehensive capacity planning

– CloudPhysics (now part of HPE) for infrastructure analytics

Security Monitoring with AI

Beyond the EDR and CSPM tools mentioned earlier, SIEM platforms with AI are becoming table stakes:

- Splunk’s User Behavior Analytics detects compromised accounts by identifying unusual access patterns

- Microsoft Sentinel (Microsoft’s cloud SIEM, formerly Azure Sentinel) uses ML to detect command-and-control communications automatically

- Google Security Operations (Google Cloud’s SIEM, formerly Chronicle) uses AI to correlate security events across your entire infrastructure

These tools work because attackers follow patterns, and ML excels at pattern recognition. A compromised admin account accessing files at 3 AM from an unusual location can look perfectly normal to static rules, since the credentials are valid. A behavioral model flags it because it deviates from that account’s usual pattern.
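The 3 AM example boils down to comparing an event against a per-user baseline. The sketch below is a bare-bones version of that idea with hypothetical event fields (`user`, `hour`, `country`); real behavior analytics use statistical models over many more signals.

```python
from collections import defaultdict

def build_baseline(events):
    """Record the hours and countries each user has been seen in."""
    baseline = defaultdict(lambda: {"hours": set(), "countries": set()})
    for event in events:
        baseline[event["user"]]["hours"].add(event["hour"])
        baseline[event["user"]]["countries"].add(event["country"])
    return baseline

def is_suspicious(event, baseline):
    """Flag unknown users, or known users at a new hour AND a new location."""
    profile = baseline.get(event["user"])
    if profile is None:
        return True
    unusual_hour = event["hour"] not in profile["hours"]
    unusual_place = event["country"] not in profile["countries"]
    return unusual_hour and unusual_place

# Hypothetical access history for one admin account.
past = [
    {"user": "alice", "hour": 9, "country": "US"},
    {"user": "alice", "hour": 10, "country": "US"},
    {"user": "alice", "hour": 14, "country": "US"},
]
baseline = build_baseline(past)

print(is_suspicious({"user": "alice", "hour": 3, "country": "RO"}, baseline))  # True
print(is_suspicious({"user": "alice", "hour": 9, "country": "US"}, baseline))  # False
```

Requiring both signals to be unusual is a design choice that trades some sensitivity for fewer false alarms, such as an admin working late from their usual location.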

Practical Implementation Strategy for Your Team

Don’t try to adopt all of these at once. Here’s a realistic rollout:

Month 1-2: Observability

– Implement AI-powered anomaly detection in your existing monitoring tool (or evaluate Datadog/New Relic)

– Set up automated root cause analysis for your top 10 most-frequent incidents

– Start using natural language interfaces for log querying if available

Month 3-4: Automation

– Begin using GitHub Copilot for infrastructure code (individual licenses are cheap)

– Generate documentation and runbooks with AI

– Test AI-assisted remediation (have it suggest fixes, but don’t auto-remediate yet)

Month 5-6: Security

– Enable AI-driven anomaly detection in your SIEM or EDR

– Run cloud security posture management with AI prioritization

– Implement AI-assisted secrets scanning

Month 7+: Optimization

– Use capacity forecasting to right-size infrastructure

– Implement AI-suggested cost optimizations

– Build custom automation on top of your established AI foundation

Tools That Didn’t Make the Cut (And Why)

- ChatGPT directly for sysadmin work: Great for learning and one-off questions. Not suitable for production decision-making without verification.

- Generic “AI operations” platforms: Many startups promise “AI for operations” but lack actual operational context. Stick with established vendors.

- Overly specialized AI tools: Some tools promise to solve one specific problem with AI (e.g., “AI for network optimization”). Usually better to solve these with domain-specific tools + human expertise.

Budget Reality Check

If you’re managing this conversation with your CFO:

- Essential AI tool: Datadog or similar observability platform with AI: $10,000-15,000/year for a mid-sized team

- Nice-to-have: GitHub Copilot licenses for your engineering team: roughly $10-39/person/month depending on plan

- Free/cheap wins: Using AI assistants for troubleshooting and documentation: $0-50/month depending on usage

ROI is real and measurable: 30% reduction in incident response time × 50 hours/month incident management = 15 hours recovered per month. At $100/hour fully-loaded sysadmin cost, that’s $1,500/month, or $18,000/year in recovered time from one tool.
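If you want to plug in your own numbers, the arithmetic above fits in a small helper. The inputs are the figures from this section; the net calculation simply subtracts the observability platform cost range quoted earlier and is a simplification that ignores rollout effort.

```python
def annual_recovered_value(hours_per_month, reduction_pct, hourly_cost):
    """Yearly value of time recovered by a percentage reduction in incident work."""
    recovered_hours = hours_per_month * reduction_pct / 100
    return recovered_hours * hourly_cost * 12

recovered = annual_recovered_value(hours_per_month=50, reduction_pct=30, hourly_cost=100)
print(recovered)  # 18000.0

# Net of the $10,000-15,000/year tool cost quoted above.
for tool_cost in (10_000, 15_000):
    print(tool_cost, recovered - tool_cost)
# 10000 8000.0
# 15000 3000.0
```

The margin is real but not enormous at these inputs, which is why measuring your own incident hours before the purchase matters.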

Conclusion: Your AI Strategy for 2026

The sysadmin role is evolving. You’re no longer just keeping systems running—you’re managing increasingly complex, distributed infrastructure. AI tools aren’t replacing you. They’re automating the parts that don’t require human judgment so you can focus on architecture, optimization, and problems that actually need creative thinking.

Start with observability. That’s where AI delivers the most immediate value. Once you see how much faster anomalies surface and how much context you get automatically, implementing the other tools becomes obvious.

The teams that adopt AI tools for sysadmin work in 2026 will have dramatic advantages: faster incident resolution, fewer pages at 2 AM, more time for strategic work, and generally less stress. The teams that don’t will find themselves increasingly behind, managing larger workloads with the same manual processes.

Your next step: Pick one tool from the observability category, do a POC (proof of concept) with 10-20% of your infrastructure, measure your metrics before and after, and build your business case from there. Most vendors offer free trials. Use them.
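For the "measure before and after" step, MTTR is the easiest metric to compute from your ticketing export. A minimal sketch, assuming each incident is an (opened, resolved) pair of ISO 8601 timestamps; the sample incidents are invented.

```python
from datetime import datetime

def mttr_minutes(incidents):
    """Mean time to resolution, in minutes, over (opened, resolved) pairs."""
    durations = [
        (datetime.fromisoformat(resolved) - datetime.fromisoformat(opened)).total_seconds() / 60
        for opened, resolved in incidents
    ]
    return sum(durations) / len(durations)

# Hypothetical incidents from before and during the POC.
before = [
    ("2026-01-05T10:00:00", "2026-01-05T11:00:00"),
    ("2026-01-12T02:00:00", "2026-01-12T04:00:00"),
]
during = [
    ("2026-02-03T09:00:00", "2026-02-03T09:30:00"),
    ("2026-02-10T01:00:00", "2026-02-10T02:00:00"),
]

baseline_mttr = mttr_minutes(before)
poc_mttr = mttr_minutes(during)
improvement_pct = (baseline_mttr - poc_mttr) / baseline_mttr * 100

print(baseline_mttr, poc_mttr, improvement_pct)  # 90.0 45.0 50.0
```

Two incidents per period is far too few to conclude anything; use at least a few weeks of data on each side before putting the number in a business case.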